Cluster management usually involves many worker machines (slaves, in Mesos terminology) that run our tasks and applications. In our project, the Apache Mesos cluster runs around 200 EC2 instances in production alone, and the Apache Storm cluster we use for real-time data processing has around 150 supervisor machines. Rather than running all of these machines as On-Demand Instances, we run them on Spot Fleet in AWS.

So what is Spot Fleet? To understand Spot Fleet, we first need to look at Spot Instances.

Spot Instances

AWS maintains a huge infrastructure with a lot of unused capacity. This unused capacity is essentially the available Spot Instance pool: AWS lets users bid for these unused resources, usually at a price significantly lower than the On-Demand price, so we can get EC2 boxes much more cheaply than we could on demand.

Spot Fleet

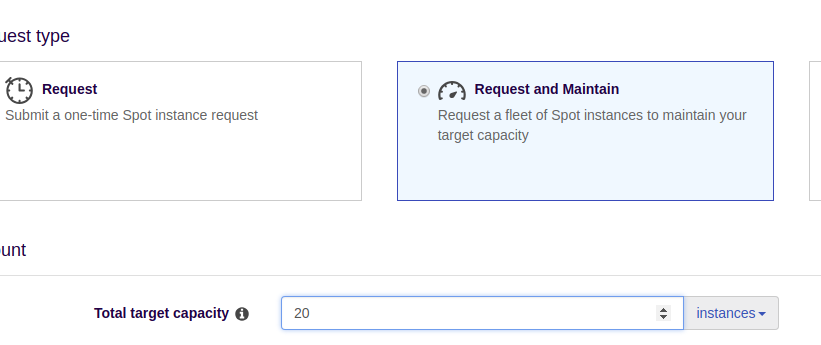

A Spot Fleet is a collection, or fleet, of Spot Instances, and optionally On-Demand Instances. The Spot Fleet attempts to launch the number of Spot Instances and On-Demand Instances to meet the target capacity that you specified in the Spot Fleet request.

The screenshot above shows our Spot Fleet request, which launches 20 instances.
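A request like the one in the screenshot can also be made through the EC2 `RequestSpotFleet` API. The sketch below builds a `SpotFleetRequestConfig` dictionary for boto3's `request_spot_fleet`; the AMI ID, subnet, IAM fleet role ARN and instance types are hypothetical placeholders, and the `diversified` allocation strategy is one reasonable choice, not necessarily the one we use.

```python
from typing import Dict, List


def spot_fleet_config(
    target_capacity: int,
    ami_id: str,
    instance_types: List[str],
    subnet_id: str,
    fleet_role_arn: str,
) -> Dict:
    """Build a SpotFleetRequestConfig for boto3's ec2.request_spot_fleet().

    All identifiers passed in are placeholders; supply your own.
    """
    return {
        # Role that allows Spot Fleet to launch and terminate instances for you.
        "IamFleetRole": fleet_role_arn,
        # Number of instances (capacity units) the fleet tries to keep running.
        "TargetCapacity": target_capacity,
        # Spread instances across the launch specifications (instance types)
        # so that one Spot pool being reclaimed does not take out the whole fleet.
        "AllocationStrategy": "diversified",
        # "maintain" asks the fleet to replace interrupted instances.
        "Type": "maintain",
        "TerminateInstancesWithExpiration": True,
        "LaunchSpecifications": [
            {"ImageId": ami_id, "InstanceType": itype, "SubnetId": subnet_id}
            for itype in instance_types
        ],
    }


config = spot_fleet_config(
    target_capacity=20,
    ami_id="ami-0123456789abcdef0",  # hypothetical
    instance_types=["m5.xlarge", "m5a.xlarge", "m4.xlarge"],  # hypothetical
    subnet_id="subnet-0123456789abcdef0",  # hypothetical
    fleet_role_arn="arn:aws:iam::123456789012:role/spot-fleet-role",  # hypothetical
)
```

With boto3 installed and credentials configured, the request would be submitted with `boto3.client("ec2").request_spot_fleet(SpotFleetRequestConfig=config)`.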

Spot instance lifecycle:

- The user submits a bid to run the desired number of EC2 instances of a particular type. The bid is the maximum price the user is willing to pay to use the instance.

- If the bid price exceeds the current spot price (which AWS determines from current supply and demand), the instances are started.

- If the current spot price rises above the bid price, or there is no capacity available, the Spot Instance is interrupted and reclaimed by AWS. Two minutes before the interruption, the metadata endpoint on the instance is updated with the termination information.
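The rules above can be condensed into a small state-transition function. This is a toy model of the bidding rules exactly as listed, not AWS's actual pricing logic: the state names are hypothetical, AWS's current Spot model no longer requires a bid (you set an optional maximum price instead), and real interruptions can also have other causes.

```python
def next_state(state: str, bid: float, spot_price: float, capacity_available: bool) -> str:
    """Toy model of the Spot lifecycle rules.

    state is one of the hypothetical labels "pending", "running" or "interrupted".
    """
    if state == "pending":
        # Instances start only when the bid exceeds the current spot price
        # and AWS has spare capacity for this instance type.
        if bid > spot_price and capacity_available:
            return "running"
        return "pending"
    if state == "running":
        # A running instance is reclaimed when the price rises above the bid
        # or the capacity is no longer available.
        if spot_price > bid or not capacity_available:
            return "interrupted"
        return "running"
    # In this model interruption is final (the default Spot interruption
    # behavior is to terminate the instance).
    return "interrupted"
```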

Spot instance termination notice

The Termination Notice is accessible to code running on the instance via the instance’s metadata at http://169.254.169.254/latest/meta-data/spot/termination-time. This field becomes available when the instance has been marked for termination and will contain the time when a shutdown signal will be sent to the instance’s operating system.
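Because the value is a timestamp rather than a countdown, code that reacts to the notice usually needs to work out how much time is left. A minimal Python sketch, assuming the value is an ISO 8601 UTC timestamp of the form `2015-01-05T18:02:00Z`:

```python
from datetime import datetime, timezone
from typing import Optional


def seconds_until_termination(termination_time: str, now: Optional[datetime] = None) -> float:
    """Seconds left before the shutdown signal, given the termination-time value.

    Assumes an ISO 8601 UTC timestamp such as "2015-01-05T18:02:00Z".
    """
    # datetime.fromisoformat() in older Python versions does not accept a
    # trailing "Z", so rewrite it as an explicit UTC offset first.
    when = datetime.fromisoformat(termination_time.strip().replace("Z", "+00:00"))
    if now is None:
        now = datetime.now(timezone.utc)
    # Clamp at zero: once the time has passed there is nothing left to wait for.
    return max(0.0, (when - now).total_seconds())
```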

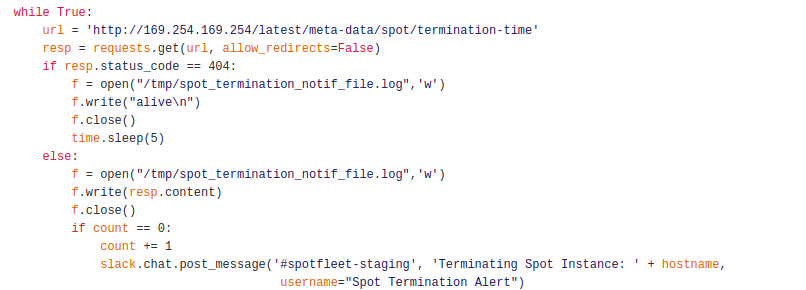

The most common way to detect that the two-minute warning has been issued is to poll the instance metadata every few seconds. The notice is available on the instance at:

http://169.254.169.254/latest/meta-data/spot/termination-time

Normally this path returns an HTTP 404 status code, but once the two-minute warning has been issued, it returns the time at which the shutdown will actually occur.

This endpoint can only be accessed from the instance itself, so the polling code has to run on every Spot Instance. A simple curl to that address returns the value. You might be tempted to set up a cron job, but do not go down that path: the smallest interval cron supports is once a minute, so if you miss the notice by a second or two, you will not detect it until the next run, and you lose half of the time available to you.
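A minimal polling loop in Python, meant to run as a long-lived process on each instance instead of as a cron job. The 5-second interval, the IMDSv2 session-token handling (with a fallback to plain IMDSv1 requests) and the `on_notice` callback are assumptions for illustration; plug in whatever the instance should do when the notice arrives.

```python
import time
import urllib.error
import urllib.request
from typing import Callable, Optional, Tuple

METADATA = "http://169.254.169.254/latest"
TERMINATION_URL = METADATA + "/meta-data/spot/termination-time"
TOKEN_URL = METADATA + "/api/token"


def fetch_termination_time(timeout: float = 2.0) -> Tuple[Optional[int], str]:
    """Return (HTTP status, body) for the termination-time metadata path.

    Tries to get an IMDSv2 session token first and falls back to a plain
    IMDSv1 request if that fails. Status is None if metadata is unreachable.
    """
    headers = {}
    try:
        token_req = urllib.request.Request(
            TOKEN_URL,
            method="PUT",
            headers={"X-aws-ec2-metadata-token-ttl-seconds": "60"},
        )
        with urllib.request.urlopen(token_req, timeout=timeout) as resp:
            headers["X-aws-ec2-metadata-token"] = resp.read().decode()
    except OSError:
        pass  # no token available: send the request without one (IMDSv1)
    try:
        req = urllib.request.Request(TERMINATION_URL, headers=headers)
        with urllib.request.urlopen(req, timeout=timeout) as resp:
            return resp.status, resp.read().decode()
    except urllib.error.HTTPError as err:
        return err.code, ""  # 404 means no termination notice yet
    except OSError:
        return None, ""  # not on EC2, or metadata service unreachable


def wait_for_termination(
    on_notice: Callable[[str], None],
    fetch: Callable[[], Tuple[Optional[int], str]] = fetch_termination_time,
    interval: float = 5.0,
    sleep: Callable[[float], None] = time.sleep,
) -> str:
    """Poll every `interval` seconds until a termination notice appears."""
    while True:
        status, body = fetch()
        if status == 200 and body.strip():
            when = body.strip()
            on_notice(when)  # e.g. send an alert, deregister, drain work
            return when
        sleep(interval)
```

On an instance, `wait_for_termination(lambda when: print("shutdown at", when))` blocks until the notice appears. Passing `fetch` and `sleep` as parameters keeps the loop testable without an EC2 instance.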

Sample Snippet:

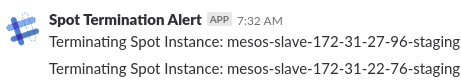

Below is the alert that we receive in Slack.

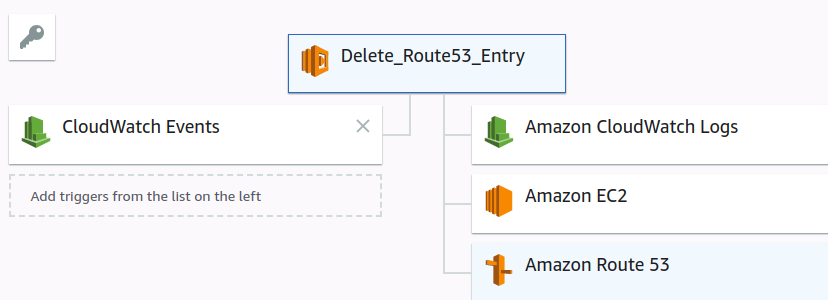
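Sending such an alert can be as simple as posting JSON to a Slack incoming webhook. The sketch below is illustrative only: the message wording and the hostname lookup are assumptions (the exact alert format we use is the one in the screenshot), and the webhook URL is one you would create in Slack yourself.

```python
import json
import socket
import urllib.request
from typing import Dict, Optional


def slack_message(termination_time: str, hostname: Optional[str] = None) -> Dict[str, str]:
    """Build an incoming-webhook payload for a Spot termination alert."""
    host = hostname or socket.gethostname()
    return {"text": f"Spot termination notice on {host}: shutdown at {termination_time}"}


def post_to_slack(webhook_url: str, payload: Dict[str, str], timeout: float = 5.0) -> int:
    """POST the payload as JSON to a Slack incoming webhook; returns the HTTP status."""
    req = urllib.request.Request(
        webhook_url,
        data=json.dumps(payload).encode("utf-8"),
        headers={"Content-Type": "application/json"},
        method="POST",
    )
    with urllib.request.urlopen(req, timeout=timeout) as resp:
        return resp.status
```

Whatever code detects the termination notice could then call `post_to_slack(webhook_url, slack_message(when))`, where `when` is the termination time read from the metadata.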

Delete Route53 Entries

We create DNS entries for all our Mesos and Storm boxes, but when those instances are terminated, their DNS entries remain, leaving many useless entries in Route53. So we came up with the idea of a Lambda function that is triggered whenever a Spot Fleet instance is terminated.

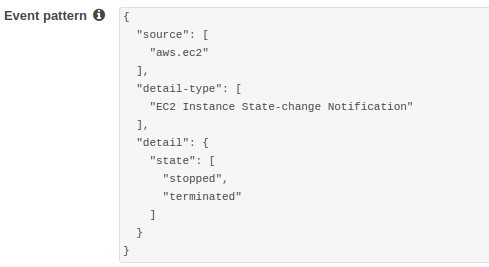

Creating a CloudWatch rule

Whenever an instance is terminated, this rule fires and triggers a Lambda function, which deletes the Route53 entries of the terminated instance.

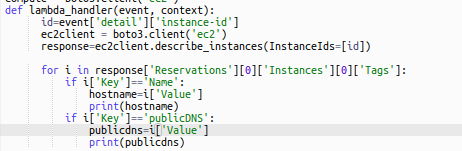

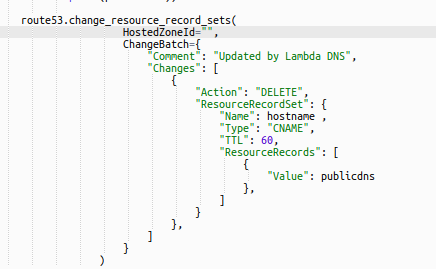
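A rule like this can be driven by EC2 state-change events. Below is a sketch of an event pattern for that, plus a helper that pulls the instance ID out of the incoming event. The pattern matches every instance termination in the account and region, not only Spot Fleet instances, so narrowing it down (for example by ignoring instances without the expected tags) is left to the Lambda; the exact filter in our rule may differ.

```python
import json
from typing import Any, Dict, Optional

# Event pattern for a CloudWatch Events (EventBridge) rule that fires when any
# EC2 instance in the account and region enters the "terminated" state.
TERMINATED_PATTERN = {
    "source": ["aws.ec2"],
    "detail-type": ["EC2 Instance State-change Notification"],
    "detail": {"state": ["terminated"]},
}

# The rule's EventPattern is passed to the API as a JSON string.
EVENT_PATTERN_JSON = json.dumps(TERMINATED_PATTERN)


def terminated_instance_id(event: Dict[str, Any]) -> Optional[str]:
    """Return the instance ID from a matching event, or None for anything else.

    The rule already filters events; this is a defensive re-check inside Lambda.
    """
    detail = event.get("detail", {})
    if (
        event.get("source") == "aws.ec2"
        and event.get("detail-type") == "EC2 Instance State-change Notification"
        and detail.get("state") == "terminated"
    ):
        return detail.get("instance-id")
    return None
```

With boto3, such a rule could be created with `events.put_rule(Name=..., EventPattern=EVENT_PATTERN_JSON)` and pointed at the function with `events.put_targets(...)`; the Lambda function also needs a resource policy that allows CloudWatch Events to invoke it.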

Below is the code snippet:

Each Mesos and Storm instance is tagged, and the Lambda function uses those tags to find the entries to delete.
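A minimal sketch of such a handler in Python with boto3 follows. It is an illustration rather than our production code: the tag key (`dns-name`), the single hosted zone ID, and the idea that each instance has exactly one `A` record named by that tag are all hypothetical. It also assumes the terminated instance's tags can still be read when the event arrives (terminated instances typically remain visible in the EC2 API for a short while).

```python
import os
from typing import Any, Dict, List, Optional

# Hypothetical configuration: adjust to your own setup.
HOSTED_ZONE_ID = os.environ.get("HOSTED_ZONE_ID", "Z0000000EXAMPLE")
DNS_TAG_KEY = os.environ.get("DNS_TAG_KEY", "dns-name")
RECORD_TYPE = "A"


def dns_name_from_tags(tags: List[Dict[str, str]], key: str = DNS_TAG_KEY) -> Optional[str]:
    """Return the fully qualified record name stored in the instance's tags."""
    for tag in tags:
        if tag.get("Key") == key and tag.get("Value"):
            name = tag["Value"]
            return name if name.endswith(".") else name + "."
    return None


def delete_dns_for_instance(instance_id: str, ec2: Any, route53: Any,
                            zone_id: str = HOSTED_ZONE_ID) -> bool:
    """Delete the record named in the instance's tag; True if one was deleted."""
    tags = ec2.describe_tags(
        Filters=[{"Name": "resource-id", "Values": [instance_id]}]
    )["Tags"]
    name = dns_name_from_tags(tags)
    if name is None:
        return False  # not one of our tagged Mesos/Storm boxes
    # A DELETE must match the existing record exactly (name, type, TTL, values),
    # so look the record up first instead of rebuilding it by hand.
    records = route53.list_resource_record_sets(
        HostedZoneId=zone_id, StartRecordName=name,
        StartRecordType=RECORD_TYPE, MaxItems="1",
    )["ResourceRecordSets"]
    # The listing starts at `name` but returns the next record if `name` is absent.
    if (not records or records[0]["Name"].lower() != name.lower()
            or records[0]["Type"] != RECORD_TYPE):
        return False
    route53.change_resource_record_sets(
        HostedZoneId=zone_id,
        ChangeBatch={
            "Comment": f"Remove stale record for terminated instance {instance_id}",
            "Changes": [{"Action": "DELETE", "ResourceRecordSet": records[0]}],
        },
    )
    return True


def lambda_handler(event: Dict[str, Any], context: Any) -> Dict[str, Any]:
    # boto3 ships with the AWS Lambda Python runtime; importing it here keeps
    # the helpers above usable (and testable) without it.
    import boto3

    instance_id = event["detail"]["instance-id"]
    deleted = delete_dns_for_instance(
        instance_id, boto3.client("ec2"), boto3.client("route53")
    )
    return {"instance_id": instance_id, "deleted": deleted}
```

Passing the EC2 and Route53 clients into `delete_dns_for_instance` keeps the lookup-then-delete logic testable with stand-in objects.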

Conclusion:

Spot Instances give us a two-minute warning before they are reclaimed by AWS. These are precious moments in which the instance can deregister so that it stops accepting new work, and finish any work in progress. In addition, a Lambda function lets us remove stale Route53 entries automatically.