Powered with blockchain technology, smart contracts have become an important factor concerning business transparency. It eliminates the necessity for intermediary services like brokers and agents to facilitate a transaction, is less time-consuming, and enables agent neutrality and automation in signing deals. So, it is essential to do a thorough analysis of smart contracts security.

The steady adoption of smart contracts on the Ethereum Blockchain has enabled millions of contracts holding numerous dollars in digital currencies, and tiny mistakes during the development of smart contracts on immutable blockchain have caused substantial losses and brought danger for future incidents. Hence, today the secure development of smart contracts is a crucial topic and a variety of other attacks and incidents associated with vulnerable smart contracts could have been avoided.

Smart Contract Attacks

The following is the list of known attacks one should be aware of and defend against while writing a smart contract.

Reentrancy

It occurs when a function makes an external call to another untrusted contract before it resolves the effects that should have been resolved. This can have unexpected effects. In this attack, the attack surface is high in terms of fund loss. The attacker can simply call the function of your smart contract and then re-enter the same piece of code and eventually drain the funds.

Real-World Impact: On 17-Jun-2016, The DAO (Decentralized Autonomous Organization) was one of the major attacks where reentrancy played a major role. As an overview, the attacker was analyzing DAO.sol, where he noticed that there is a function that updates user balances and totals at the end. Later, he found a way to recursively call the function before it finishes its execution so that he can move as many funds as he wants. This resulted in the transfer of around one-third (3.6 million) of ether that had been committed to The DAO.

Access Control

Access control is a very common issue. This problem occurs when someone tries to access functionality in the smart contract to which he was not authorized. To access external contracts functionality, the method or property must be set as external or public. While insecure visibility settings give attackers straightforward ways to access a contract’s private values or logic, access control bypasses are sometimes more subtle.

Solidity has a global variable, tx.origin (deprecated), which returns the address of the account that originally sent the call. Using this variable for authentication leaves the contract susceptible to a phishing-like attack.

Real-World Impact: Rubixi’s (Ponzi scheme) Fees were stolen because the constructor function had an incorrect name (used DynamicPyramid instead of Rubixi), allowing anyone to become the owner.

Arithmetic

The data types available in solidity to store an integer can only hold the numbers in a specific range. An over/underflow occurs when an operation is performed that needs a fixed size variable to store a number (or piece of data) that’s outside the range of the variable’s data type. A uint8 for instance can only store numbers within the range [0,255]. Trying to store 256 into a uint8 will end in 0. This can be exploited if user input is unchecked and calculations are performed which result in numbers that lie outside the range of the data type that stores them.

Real-World Impact: The 4chan group who built Proof of Weak Hands Coin (PoWHC, a Ponzi scheme) lost $800k overnight because of over/underflow issues. The problem was in PoWH’s implementation of ERC-20. The attacker exploited the underflow issue to gain an exceedingly large balance of PoWH Coins.

Similarly, on 4/22/2018, an unusual BEC token transaction was recorded. In this particular transaction, someone transferred an extremely large amount of BEC tokens. Later, the analysis proved it to be a classic integer overflow issue.

Unchecked Low-Level Calls

In solidity, you can either use low-level calls such as: address.call(), address.callcode(), address.delegatecall(), and address.send(); or you can use contract calls such as: ExternalContract.doSomething(). Low-level calls will never throw an exception, rather will return false if they encounter an exception, whereas contract calls will automatically throw.

If the return value of a low-level call is not checked, the execution resumes despite the function call throwing errors. This can cause unexpected behavior and break the program logic. A failed call can even be caused by an attacker, who may be able to further exploit the application.

Real-World Impact: In the King of the Ether, an unchecked failed send() caused some monarch compensation payments and over/underpayment refunds to fail to be sent.

Denial of Service

Typically, there are three types of DoS attacks that can happen on a smart contract.

One of them would be an Unexpected Revert, where you were not expecting a transaction to revert, but it is reverting. Despite writing all kinds of functionalities in your Smart Contracts, which are imitating your business requirements, there is still a scenario where a different type of a user, which is not an individual Ethereum address, but another malicious Smart Contract can play with your system and not allow you to completely execute the algorithm, which you have written.

The next one is related to Block Gas Limit. The Block Gas Limit is the maximum amount of gas that can go through in a single block. The more complexity, the more competition that your transaction has, the higher the gas that is required. So, there are chances where some heavy competition can result in your transactions going out of gas. This is often the case in systems that loop over an array or mapping that can be enlarged by users at little cost.

Last is Block Stuffing. So, in this attack, the attacker can place a transaction and then blocks the entire Ethereum network with a lot of transactions so that nobody else can participate in the Smart Contract. To ensure their transactions are being processed by miners, the attacker can prefer to pay higher transaction fees. By controlling the quantity of gas consumed by their transactions, the attacker can influence the number of transactions that get to be mined and included within the block.

Real-World Impact: At one time, GovenMental (an old Ponzi scheme) had accumulated 1100 ether. This Reddit Post describes how the contract required the deletion of a large mapping to withdraw the ether. The deletion of this mapping had a gas cost that exceeded the block gas limit at the time and thus was not possible to withdraw the 1100 ether.

Code Injection via delegatecall

There exists a special variant of a message call, named delegatecall. The DELEGATECALL opcode is just like the standard message call, except that the code executed at the targeted address is run in the context of the calling contract along with the very fact that msg.sender and msg.value remain unchanged. This allows a smart contract to dynamically load code from a different address at runtime. Storage, current address, and balance still refer to the calling contract. Calling into untrusted contracts is extremely dangerous because the code at the target address can change any storage values of the caller and has full control over the caller’s balance.

Real-World Impact: About $31M worth of ether was stolen in the second parity multi-sig attack from primarily 3 wallets. A method initWallet() which was supposed to initialize the wallet was not given proper visibility and was left public. This allowed the attacker to call these functions on deployed contracts, resetting the ownership to the attacker’s address. Then the attacker called the kill() method to self-destruct the contract. As a result, all Parity multi-sig wallets became useless and all funds or tokens in the Parity multi-sig were frozen forever.

Signature Replay

The basic idea of signature replay is that you can use the same signature to execute a transaction multiple times. The attacker can listen to the communication channel and make a copy of the signed message, which is possible if the communication channel is accessible. Then the attacker can resubmit it to the message receiver. The receiver won’t be able to tell the difference unless there is something in the message which identifies whether this message is sent before or not. Typically a cryptographic signature only identifies the signer and the message integrity and nothing more. There is no information on whether the signature is already used or the message has been sent several times.

Time Manipulation

The basic idea of this exploit is that miners can manipulate block.timestamp with some constraints. The constraints are that the time must be after the previous block timestamp and it cannot be too far in the future. Miners have the ability to adjust timestamps slightly which can prove to be quite dangerous if block timestamps are used incorrectly in smart contracts.

Real-World Impact: GovernMental (a Ponzi scheme, also discussed in the DoS attack) was also exposed to timestamp attack. In the scheme, the player who joined the round at last and was there for at least a minute got paid. Thus, a miner, who’s a player, could adjust the timestamp (to a future time, to make it look like a minute had elapsed) to make it appear that the player was the last to join for over a minute (even though this is not true in reality).

Front Running

Front-running is a course of action where someone benefits from early access to market information about upcoming transactions and trades, typically because of a privileged position along with the transmission of this information.

Every new blockchain transaction first relays around the network, then it’s selected by a miner and put into a valid block, and finally, the block is well-enough incorporated in the blockchain that it is unlikely to be changed. Front-running is an attack where a malicious node observes a transaction after it is broadcast but before it is finalized, and attempts to have its etransaction confirmed before or instead of the observed transaction.

Real-World Impact: Bancor is an ICO that spectacularly raised over $150M in funding over a few minutes. Researchers at Cornell revealed that Bancor was vulnerable to front-running. They pointed out that miners would be able to front-run any transactions on Bancor since miners are free to re-order transactions within a block they’ve mined.

Other Vulnerabilities

The Smart Contract Weakness Classification Registry offers a complete and up-to-date catalog of known smart contract vulnerabilities and anti-patterns along with real-world examples. Browsing the registry is a good way of keeping up-to-date with the latest attacks.

All these vulnerabilities suggest that despite their potential, repeated security concerns have shaken the trust in handling billions of amounts by smart contracts. All the security issues should be arrested before deploying the contract otherwise the cost of vulnerability is much higher. Another attack like DAO that almost brought down the world’s second-largest blockchain will result in a catastrophe.

In the next section, will discuss Manticore. It is based on the symbolic execution of smart contracts for analyzing and detecting various types of vulnerabilities. A symbolic execution tool tries to explore all possible paths of your contract and generate reproducible input for each case. Symbolic execution has a remarkable potential for programmatically detecting broad classes of security vulnerabilities in modern software.

Manticore

Manticore is a symbolic execution tool for the analysis of smart contracts and binaries. It enables the exploration of a large number of execution paths by replacing program inputs with symbolic parameters and studying the conditions on these parameters that determine the execution of each element of the program. It’s pure Python with minimal dependencies.

According to the official documentation, these are the features of Manticore:

- Program Exploration: Manticore can execute a program with symbolic inputs and explore all the possible states it can

- Input Generation: Manticore can automatically produce concrete inputs that result in a given

program state.

- Error Discovery: Manticore can detect crashes and other failure cases in binaries and smart contracts.

- Instrumentation: Manticore provides fine-grained control of state exploration via event callbacks and instruction

- Programmatic Interface: Manticore exposes programmatic access to its analysis engine via a Python

Not only the Ethereum Smart Contracts but it can also analyze Linux ELF binaries (x86, x86_64, aarch64, and ARMv7) and WASM Modules. Manticore is notably slow because it goes through different sections of code with a variety of attack scenarios but the end result is worth the wait.

In this blog, our main focus is on Manticore’s CLI tool, although it also offers an expressive and scriptable Python API for custom analyses and application-specific optimizations. Anyone with experience in exploitation or reversing can use the API to create specialized binary analysis tools, and answer a range of questions, such as:

“What is a program input that will cause the execution of this code?” “Can the program reach code X at runtime?”

“At point X in execution, is it possible for variable Y to be a specified value?”

“Is user input ever used as a parameter to libc function X?” “How many times does the program execute function X?”

“How many instructions does the program execute if given input X?”

Please follow this link for installation.

Detecting Vulnerabilities in Smart Contracts

Manticore comes with an easy to use Command Line Tool (CLI) which allows you to quickly generate new program test cases with symbolic execution. It’s capable of input generation, crash discovery, execution tracing, etc.

Let’s take VulnerableToken.sol as an example.

In this contract, users can buy a token by calling the fund method and destroy the token by calling the burn method. A user can take control of the contract by calling the takeOwnership method, which requires the user to have more tokens than the current owner.

Since the fund method automatically grants more tokens to the current owner if the purchased tokens are less than the owner’s balance, it should only be possible to take over the contract by buying more tokens than the current owner has in a single transaction.

Manticore by default will create an attacker account with a balance of 1000, which won’t be enough to surpass the owners’ starting balance of 1 million tokens. It should be impossible to

take over the contract. However, there is a bug in the burn function, if an attacker tries to burn more tokens than they currently own, the possibility is that they will overflow their balance and gain an absurd number of tokens. Let’s run Manticore on this contract and see if it can find the bug.

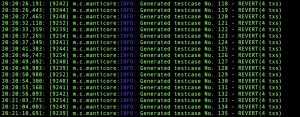

Use the below command to start the symbolic execution.

$manticore VulnerableToken.sol

Once started, it will automatically generate various test cases with detailed output.

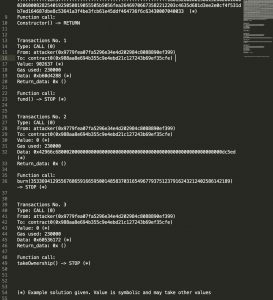

All the generated result files will be stored in a separate folder. We will mainly focus on user_xxxxxxxx.tx and global.findings files in this blog. Details about each file can be found on this link.

The user_xxxxxxxx.tx file contains the details of the transactions that happened in a single test case and global.findings has the results of any detectors that were triggered during execution.

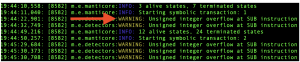

We can see in the CLI, manticores overflow detector first warns an overflow after the second transaction.

It must be calling the fund method immediately followed by burn. By looking through the resulting test cases, we can find the test case which allowed the attacker to take ownership of the contract.

In this test case, after the owner creates the contract, the attacker buys a symbolic number of tokens (902637). Then it calls the burn function with a massive symbolic argument, which overflows the balance and grants them a massive number of tokens. After that, they are able to call takeOwnership and have it stop successfully instead of throwing a revert.

As we have just seen, Manticore was able to symbolically evaluate sequences of the transaction, including one that revealed the bug in the contract

Manticore Detectors

Manticore cli comes with a pack of default detectors turned on (ex. Integer Overflow). These will print warnings as soon as they suspect that a state behaves in a certain way and at the end, this is going to be collapsed at global.findings.

If there is nothing printed on global.findings, we can say that no “bug” has been detected. It means that the implemented detectors did not find any contract path that matches their specific property. You can check the obtained coverage as a measure of exploration completeness.

Manticore will make a great effort to exercise all possible contract paths, though there are certain limitations (dynamic of great size arguments, call data size, number of symbolic transactions, etc).

In the output folder, you will find the transaction trace for all different contract states that manticore found. You can see account balances and all that to check manually that nothing really bad happened.

The findings and detected things depend on the default detectors enabled. The best part of the analysis is all the high coverage test cases you’ll find in the output folder. Ideally, you should check all of it to see if you can break any contract invariant (whatever that is for you) at any of them.

Below is the list of detectors available and activated by default by Manticore.

| Detector | Description |

| DetectEnvInstruction | Detect the usage of instructions that query environmental/block information:BLOCKHASH, COINBASE, TIMESTAMP, NUMBER, DIFFICULTY, GASLIMIT, ORIGIN, GASPRICE

Sometimes environmental information can be manipulated. Contracts should avoid using it. Unless in special situations. |

| DetectSuicidal | Reachable self destruct instructions |

| DetectExternalCallAndLeak | Reachable external call or ether leak to sender or arbitrary address |

| DetectInvalid | Invalid instruction detection |

| DetectReentrancySimple | Simple detector for reentrancy bugs. Alert if contract changes the state of storage (does a |

| write) after a call with >2300 gas to a user controlled/symbolic external address or the msg.sender address. | |

| DetectReentrancyAdvanced | Detector for reentrancy bugs. Given an optional concrete list of attacker addresses, warn on the following conditions.1) A successful call to an attacker address (address in attacker list), or any human account address (if no list is given). With enough gas (>2300).

2) A SSTORE after the execution of the CALL. 3) The storage slot of the SSTORE must be used in some path to control flow |

| DetectIntegerOverflow | Detects potential overflow and underflow conditions on ADD and SUB instructions. |

| DetectUnusedRetVal | Detects unused return value from internal transactions |

| DetectDelegatecall | Detects DELEGATECALLs to controlled addresses and or with controlled function id. This detector finds and reports on any delegatecall instruction any the following propositions are hold:* the destination address can be controlled by the caller

* the first 4 bytes of the calldata are controlled by the caller |

| DetectUninitializedMemory | Detects uses of uninitialized memory |

| DetectUninitializedStorage | Detects uses of uninitialized storage |

| DetectRaceCondition | Detects possible transaction race conditions (transaction order dependencies). The RaceCondition detector might not work properly for contracts that have only a fallback function. |

| DetectManipulableBalance | Detects the use of manipulable balance in strict compare. |

Conclusion

Manticore is a great tool to do static analysis of smart contracts, it’s very flexible and covers a variety of bug types. These bugs, if not detected, could have resulted in a significant loss. Due to manticores’ way of work, it takes a long time to analyze smart contracts and in some cases it even times-out. Also, it consumes significant memory space as well in the system. Apart from these two issues, it’s an excellent tool to find bugs that gets even better with the scriptable Python APIs.

References

- https://blog.trailofbits.com/2017/04/27/manticore-symbolic-execution-for-humans

- https://github.com/trailofbits/manticore/wiki

- https://github.com/runtimeverification/verified-smart-contracts/wiki/List-of-Security

- https://github.com/trailofbits/manticore

- https://github.com/trailofbits/manticore/issues/1435

- https://blog.sigmaprime.io/solidity-security.html

- https://www.dasp.co

- https://consensys.github.io/smart-contract-best-practices/known_attacks/

- https://github.com/trailofbits/manticore/blob/master/manticore/ethereum/detectors.py

- https://dl.acm.org/doi/10.1145/3282373.3282419

- https://somish.com/all-about-smart-contract-auditing-denial-of-service-attacks-on-smart

- https://medium.com/@zhongqiangc/smart-contract-stuffing-fomo3d-42cb1d11ab03

- https://consensys.net/diligence/blog/2019/02/taxonomy-of-front-running-attacks

- https://swcregistry.io/

- https://www.cyberpunk.rs/symbolic-execution-tool-manticore

- https://medium.com/cryptronics/signature-replay-vulnerabilities-in-smart-contracts